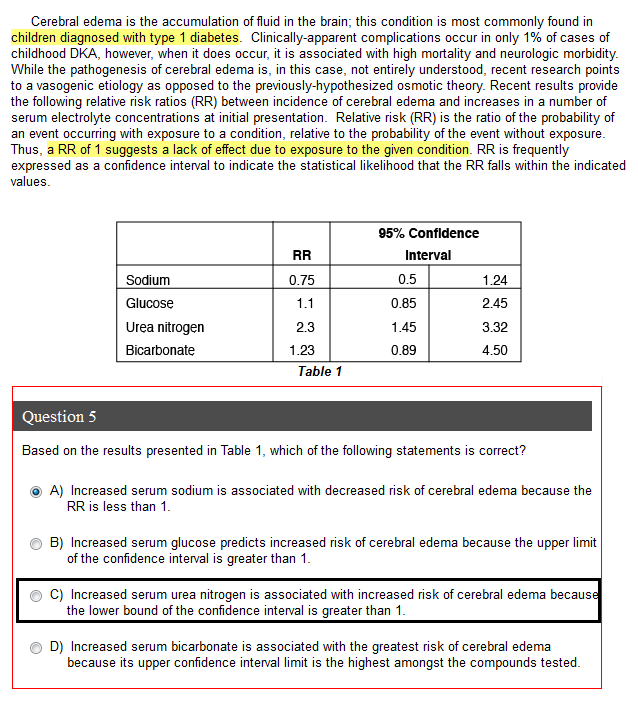

Are you familiar with error bars? That's probably the easiest way to look at this. Say you're doing an experiment on obesity. You feed rats diet X, Y, or Z and compare their weights to controls after 15 weeks. You plot a bar graph that shows the fold change in weight for each diet relative to controls. So control is 1.0, X is 1.4, Y is 1.2, and Z is 2.0 (I made these numbers up). To be clear, this means that if the control rat weighs 10 g, then the rat eating diet X weighs 14 g, and so on. So your bar graph will show four bars with the following heights: 1.0, 1.4, 1.2, and 2.0. Okay, can you draw any conclusion from this?

NO! You can't draw a conclusion because you don't know the error in your measurement. So I'll give you that too: this is called a confidence interval. And I'm giving you the 95% confidence interval, which is standard. Roughly, this means that if you were to repeat the whole experiment 100 times and compute an interval each time, about 95 of those intervals would contain the true value. Okay, so X becomes 0.9 - 1.6, Y is 0.8 - 1.9, and Z is 1.5 - 2.5. So in your mind, insert those error bars into your bar graph. Here's the question: did any diet significantly alter the rats' weight?
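Quick aside before you answer, in case the "95%" part feels abstract. Here's a rough simulation sketch of what it refers to. Everything in it is made up for illustration: the `coverage` function, the true fold change of 1.0, the spread of 0.3, and 10 rats per experiment are all assumptions, and it uses the simple known-spread interval (mean ± 1.96 standard errors) rather than whatever method a real study would use. It repeats a pretend experiment many times and counts how often the interval captures the true value.

```python
import random
import statistics


def coverage(true_mean=1.0, sd=0.3, n=10, trials=2000, seed=0):
    """Fraction of simulated experiments whose 95% interval contains true_mean.

    All parameter values are hypothetical; sd is treated as known,
    which is a simplification.
    """
    rng = random.Random(seed)
    # Half-width of a 95% interval when the spread is known: 1.96 standard errors.
    half_width = 1.96 * sd / n ** 0.5
    hits = 0
    for _ in range(trials):
        # One pretend experiment: n measured fold changes.
        sample = [rng.gauss(true_mean, sd) for _ in range(n)]
        m = statistics.mean(sample)
        # Did this experiment's interval capture the true value?
        if m - half_width <= true_mean <= m + half_width:
            hits += 1
    return hits / trials


print(coverage())
```

The printed fraction should land close to 0.95. The point is that the "95%" describes how often the procedure produces an interval containing the true value, not a guarantee about any single interval.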