Daphne Koller (Stanford PhD in computer science; former chief computing officer at Google’s A.I. health initiative; co-founder of the online learning platform Coursera; and founder of Insitro) was on CNN’s ‘GPS’ with Fareed Zakaria, discussing how A.I. can impact the future of healthcare.

Excerpts from the interview pertinent to our field are below.

Link to the podcast: Bill Gates & Tax Rates Fareed Zakaria GPS podcast

Zakaria: What’s the most obvious place machine learning can impact healthcare?

Koller: The most obvious application for machine learning in healthcare is on the diagnostic side, where it (A.I.) looks at a scan, etc., and predicts what the pt. has (dx.). This draws on multiple sources of data, including an X-ray scan, a pathology slide, or a liquid biopsy and the fragments of DNA found in the blood.

Z: Why would the computer be better than the great doctor who’s seen a bunch of these same images and can tell what the patterns look like?

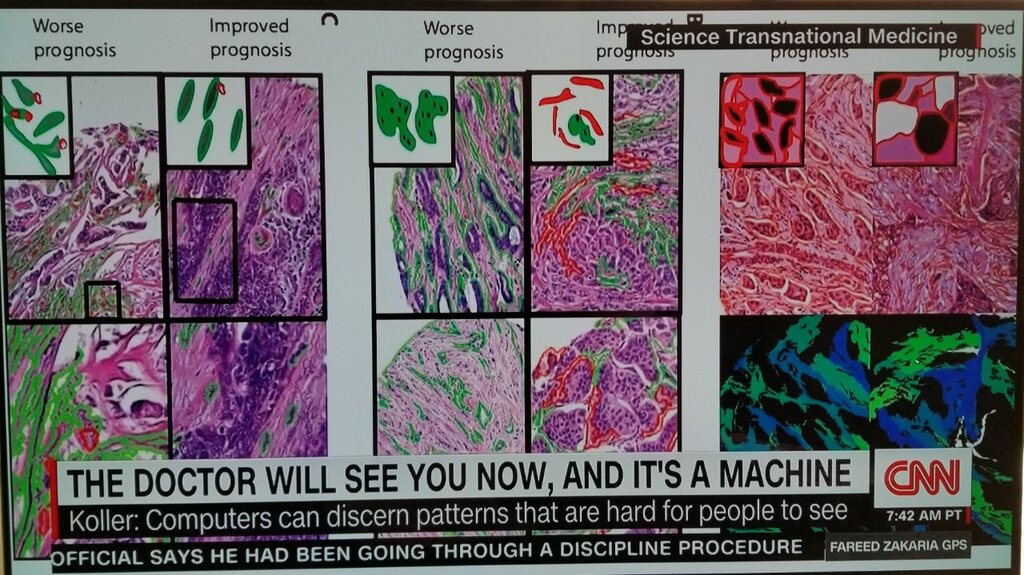

K: What’s surprising is on the imaging side where we think human beings have been looking at these for over a century, why would a computer be able to do better? Computers are able to discern subtle patterns in these images that are very hard for people to see, partly because they can look at so many different samples and extract commonalities people just can’t hold in their head.

You look at a cancer pathology which has thousands of cells, and some of them are aberrant that way and others are aberrant this way. How do you put all of that together into something that corresponds to the diagnosis? But it becomes more relevant with liquid biopsies, when you’re looking at very subtle changes in the composition of DNA. People have no idea how to look at that data.

Zakaria concluded by saying, “I think everybody understands the computer can look at images with much greater detail and accuracy than human beings…”

I always said that it’s not a question of “if” but “when” AP as it is currently practiced will become obsolete. I guesstimated we’ve got about 50 years left. Now, I’m not so sure it’s even that much…
