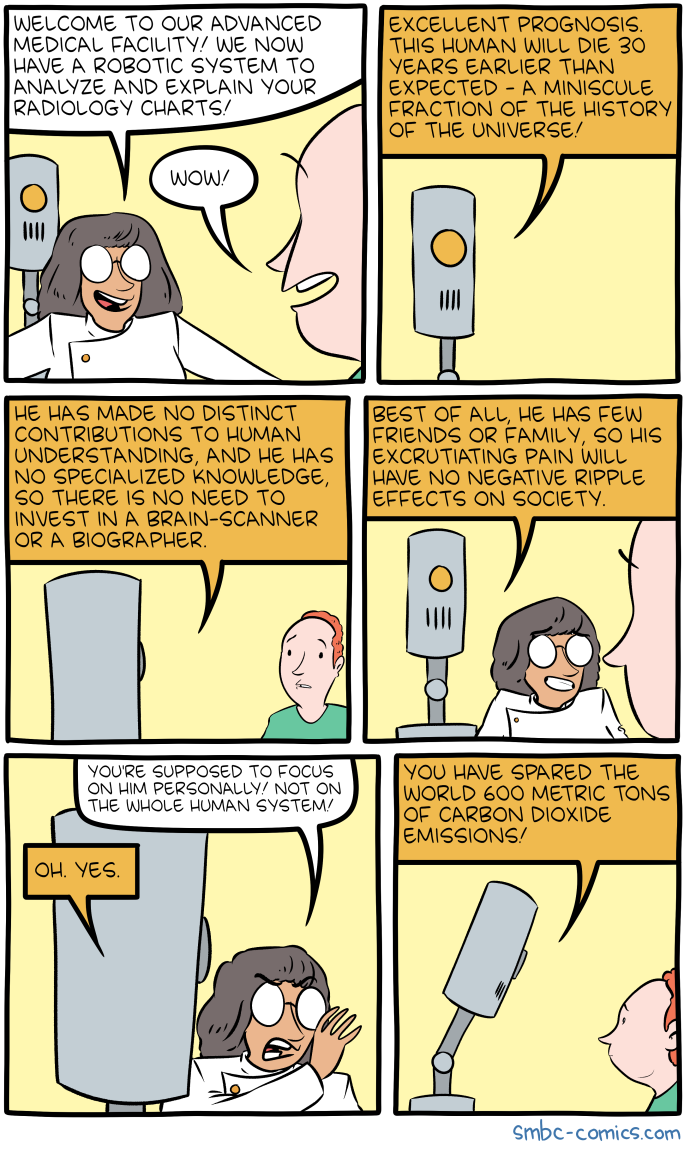

There is a lot of hype around AI and ML right now, and major hospitals, universities and tech companies have already started partnering with big industry players like IBM and GE, to name a few. There’s also no shortage of articles about how AI has outperformed a pathologist or a dermatologist at diagnosing early cancer, or how pulmonary nodules can be detected within seconds on chest CTs using current ML techniques. Does that mean that radiologists’ days are numbered? Will radiologists eventually be replaced by machines?

In short, NO. Here are my opinions on why not:

1. I know this is cynical, but you can’t sue a computer. The lawyers will need someone to blame when an incidental 2 mm pulmonary nodule (which has a <1% risk of cancer) turns out to be cancer 5 years later. Who will take the liability? Certainly not IBM or GE. They wouldn’t be in this game if they opened themselves up to litigation like that. Moreover, if you got a CT done and the doctor told you “computer says it’s normal,” would you say OK, or would you want to make sure that a human being looked at it? Even worse, if you heard “computer says it’s cancer, we’re going to admit you and prep for surgery,” wouldn’t you want a person to look at that?

2. While these algorithms are powerful, they are also very brittle. For example, a small amount of carefully crafted noise in an image can trick Google’s AI into thinking that 4 machine guns are a helicopter. There need to be quite a few breakthroughs before we can expect an AI to reliably call pathology on a radiology exam. I think AIs will probably get very good at calling a normal study normal. But differentiating pancreatitis, a pancreatic head mass, and focal atrophy of the pancreatic body (which gives the illusion of a pancreatic head mass) is going to be tough. AIs will probably just flag studies for us and do some preliminary interpretation, and we’ll put the final approval on it.

3. The amount of data needed to train and validate the algorithms is crazy. You need maybe 100,000 examples of appendicitis at minimum to create an AI that can call it well. Half of that is used to train the algorithm and the other half is used to validate/test it. Let’s say you use a technique called “transfer learning” to cut down the number of cases needed; you still need maybe 10,000 examples of appendicitis. That’s actually doable. But try finding 10,000 cases of rhombencephalosynapsis or some other rare disease. There just aren’t that many cases of rare diseases for us to create and train algorithms on. That’s why you need a radiologist to find that zebra sitting in a herd of horses.

4. Many of these “ground-breaking” algorithms and ML concepts existed in the 70s and 80s. Computing power is the transformative factor today, but even that will have its saturation point. You can only fit so many transistors onto a microchip. Moore’s Law (the observation that the number of transistors in a dense integrated circuit doubles about every two years) is actually slowing down, and at some point we may hit that ceiling before achieving the computing power needed for a decent radiology AI.
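
To put a rough formula on the Moore’s Law observation in point 4: if a chip holds $N_0$ transistors today and the count doubles every two years, then after $t$ years it would hold approximately

$$N(t) = N_0 \cdot 2^{t/2}$$

For example, ten years of uninterrupted doubling would give $2^{5} = 32$ times as many transistors. This is only an idealized sketch (the symbols $N_0$, $N(t)$ and $t$ are my own labels for illustration). A slowdown means the real doubling period is stretching beyond two years, so actual growth falls short of this curve.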

I know AI is coming, but it’s not coming to replace us. It’s coming to transform our space and ultimately help us. AI is good at repetitive tasks in a very narrow range. If it can remove some of the mundane from my day-to-day, I’m all for it.
